Linux 7.5 kernel build and NVIDIA

10/3/2023

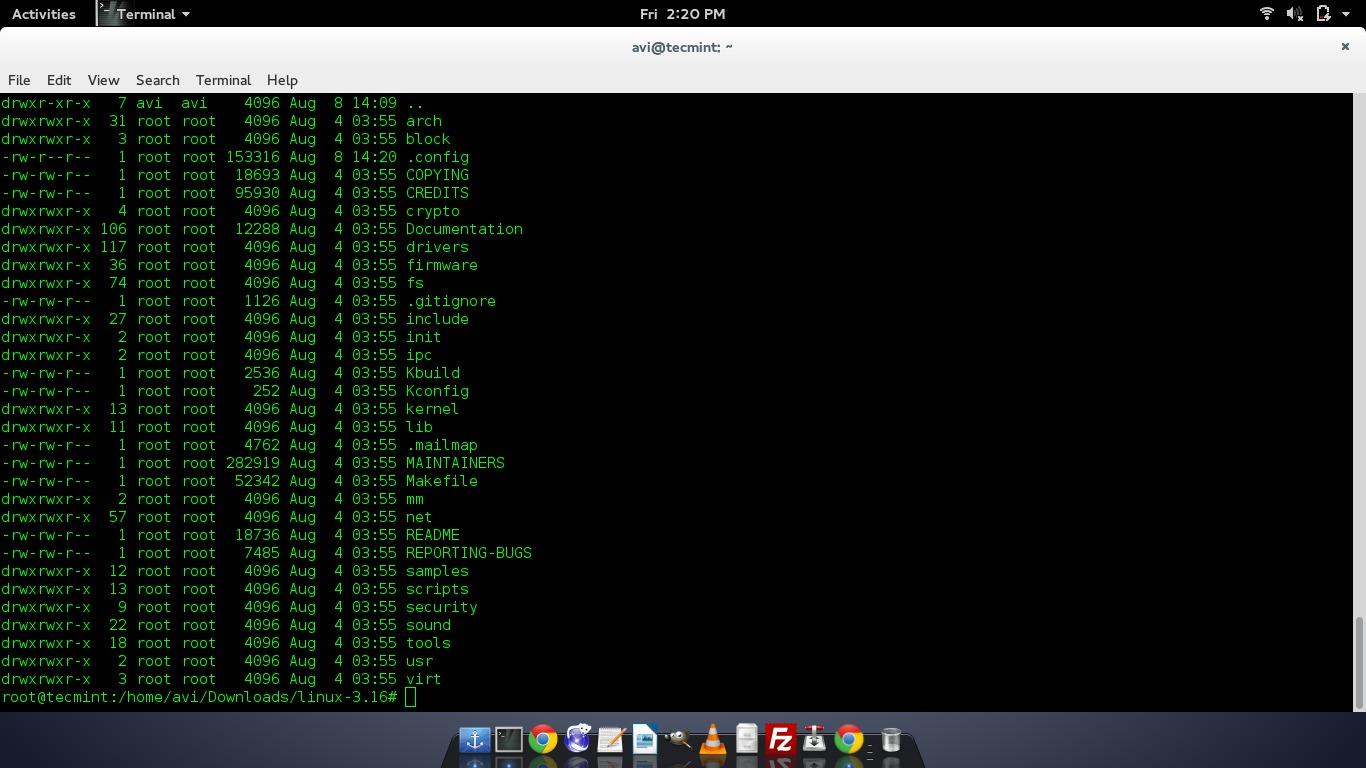

The errors I ran into with the NVIDIA driver (specifically the library libcuda.so.352.63) came from what seemed to be a difference between the version of the library installed on the cluster and the version I got by extracting the library without installing the driver (using the --extract-only option). If you provide me with the full details of your installation (the versions of the packages you have installed, and the OS of your image and host), I might be able to think of something, but my suspicion is that the driver version on your host machine may not be new enough. That actually occurred on our cluster, and after a sysadmin updated the driver, it worked. If you are sure that is not the case, it may be that the driver installed on the machine isn't new enough for the GPU.

My first suspicion is that there is still a version mismatch between the drivers installed in the image and on the host. Notice how Python seems to load libcuda.so correctly, yet there is later an error saying it was unable to find libcuda.so. I feel like your error still has to do with the version of libcuda.so you are using. In my case, yes, nvidia-smi as well as TensorFlow both work correctly. It seems like you are doing everything right, yet it is still not working. I don't necessarily have a great answer for you.

I tensorflow/core/common_runtime/gpu/gpu_:81] No GPU devices available on machine.
I tensorflow/stream_executor/cuda/cuda_:189] kernel reported version is: 346.47.0
I tensorflow/stream_executor/cuda/cuda_:347] driver version file contents: """NVRM version: NVIDIA UNIX x86_64 Kernel Module 346.47 Thu Feb 19 18:56:
I tensorflow/stream_executor/cuda/cuda_:185] libcuda reported version is: Not found: was unable to find libcuda.so DSO loaded into this program
I tensorflow/stream_executor/cuda/cuda_:160] hostname: midway-l34-01
I tensorflow/stream_executor/cuda/cuda_:153] retrieving CUDA diagnostic information for host: midway-l34-01
I tensorflow/stream_executor/dso_:108] successfully opened CUDA library libcurand.so locally
E tensorflow/stream_executor/cuda/cuda_:491] failed call to cuInit: CUDA_ERROR_UNKNOWN
I tensorflow/stream_executor/dso_:108] successfully opened CUDA library libcuda.so locally
I tensorflow/stream_executor/dso_:108] successfully opened CUDA library libcufft.so locally
I tensorflow/stream_executor/dso_:108] successfully opened CUDA library libcudnn.so locally
I tensorflow/stream_executor/dso_:108] successfully opened CUDA library libcublas.so locally
Type "help", "copyright", "credits" or "license" for more information.
Singularity/tensorflow_0.9.img> nvidia-smi
Singularity/tensorflow_0.9.img> lspci | grep -i nvidia
| Fan Temp Perf Pwr:Usage/Cap| Memory-Usage | GPU-Util Compute M. |
| GPU Name Persistence-M| Bus-Id Disp.A | Volatile Uncorr.

override in … (or, if you want to compile it for the default kernel used by nix, you can use … but be aware that if you install it on a system running another kernel it will not work) and install the module in the kernel using boot.extraModulePackages = …. The safest way to do this is to use … to select the correct module set: boot.… Note that if you deviate from the default kernel version, you should also take extra care that extra kernel modules match the same version.

pkgs.linuxPackages_custom_tinyconfig_kernel
You can choose your kernel simply by setting the boot.kernelPackages option, for example in /etc/nixos/configuration.nix.
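Both halves of this post come down to version matching: the libcuda.so library has to agree with the NVIDIA kernel module on the host, and extra kernel modules have to agree with the kernel they are loaded into. The Python sketch below is only an illustration of those two checks and is not taken from the original discussion. All function names are hypothetical, and the `vermagic` format is an assumption based on what `modinfo -F vermagic` usually prints (the kernel release as the first field).

```python
import re

VERSION = r"(\d+(?:\.\d+)*)"

def parse_diagnostics(log_text):
    """Return (kernel_version, libcuda_version) from TensorFlow's CUDA
    diagnostic lines. Either value is None when the log has no parsable
    version there, e.g. 'libcuda reported version is: Not found: ...'."""
    kernel = re.search(r"kernel reported version is: " + VERSION, log_text)
    libcuda = re.search(r"libcuda reported version is: " + VERSION, log_text)
    return (kernel.group(1) if kernel else None,
            libcuda.group(1) if libcuda else None)

def library_version(filename):
    """Return the version suffix of a name like 'libcuda.so.352.63', or None."""
    match = re.fullmatch(r"libcuda\.so\." + VERSION, filename)
    return match.group(1) if match else None

def same_driver(version_a, version_b):
    """Compare two driver versions numerically, ignoring trailing zero
    components, so that '346.47.0' and '346.47' count as equal."""
    def key(version):
        parts = [int(p) for p in version.split(".")]
        while parts and parts[-1] == 0:
            parts.pop()
        return parts
    if version_a is None or version_b is None:
        return False
    return key(version_a) == key(version_b)

def module_matches_kernel(vermagic, kernel_release):
    """Assumption: the first whitespace-separated field of a module's
    vermagic string is the kernel release it was built for."""
    fields = vermagic.split()
    return bool(fields) and fields[0] == kernel_release

if __name__ == "__main__":
    log = (
        "kernel reported version is: 346.47.0\n"
        "libcuda reported version is: Not found: was unable to find "
        "libcuda.so DSO loaded into this program\n"
    )
    kernel_version, libcuda_version = parse_diagnostics(log)
    extracted = library_version("libcuda.so.352.63")
    print("kernel module:", kernel_version)
    print("libcuda loaded by TensorFlow:", libcuda_version)
    print("extracted library matches host driver:",
          same_driver(extracted, kernel_version))
```

With the values quoted in this post, the extracted library (352.63) does not match the host kernel module (346.47.0), which is consistent with the version-mismatch suspicion above. On a real machine the kernel release for `module_matches_kernel` could come from `platform.release()`.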
